Research Projects

Cloud Augmented Reality Server

We are developing a location-based AR platform in which a server performs all rendering and geo-positioning, allowing a higher-fidelity experience without increasing the load on the endpoint (in this case, a phone). Using Unity, environments are dynamically positioned based on the client’s GPS location and relative pose, then rendered to a video stream that is sent back to the client in real time and overlaid on its camera feed. The Unity WebRTC library is used to establish a peer-to-peer streaming connection with the client. An embedded WebSocket server (using websocket-sharp) is also created to handle signaling locally between multiple clients and the server.
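As a rough illustration of the geo-positioning step, the sketch below converts a client GPS fix into a metric offset from a fixed scene anchor, which a server could then use to place the environment relative to the client. It is written in Python for brevity rather than in Unity's C#, and it assumes an equirectangular (flat-earth) approximation that is only reasonable over campus-scale distances. The function name, the anchor concept, and the example coordinates are hypothetical and not taken from the project.

```python
import math

# Mean Earth radius in metres; adequate for the small distances assumed here.
EARTH_RADIUS_M = 6_371_000.0


def gps_to_local_offset(anchor_lat, anchor_lon, client_lat, client_lon):
    """Return the client's (east, north) offset in metres from the scene anchor.

    Uses an equirectangular approximation: longitude differences are scaled by
    the cosine of the anchor latitude, latitude differences are used directly.
    All inputs are in decimal degrees.
    """
    lat0 = math.radians(anchor_lat)
    d_lat = math.radians(client_lat - anchor_lat)
    d_lon = math.radians(client_lon - anchor_lon)
    east = EARTH_RADIUS_M * d_lon * math.cos(lat0)
    north = EARTH_RADIUS_M * d_lat
    return east, north


if __name__ == "__main__":
    # Hypothetical anchor and client fix, roughly 0.001 degrees apart in latitude.
    east, north = gps_to_local_offset(34.0700, -118.4420, 34.0710, -118.4420)
    print(f"east={east:.2f} m, north={north:.2f} m")
```

A real deployment would also need to fuse this offset with the client's relative pose and account for GPS noise, which this sketch does not attempt.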

In the demo below, you can see a recreation of UCLA’s quad with some bouncing balls to demonstrate the ability to simulate physics that would begin to stress a device-only system. With improved networking technology like 5G, we hope to see latency reduced to a point where a server-based platform’s performance is indistinguishable from a purely local implementation.

In the following demonstration, the same platform is used to detect tags in the real world, which are mapped to digital research posters in an AR environment.

Server-Based Drone Controller

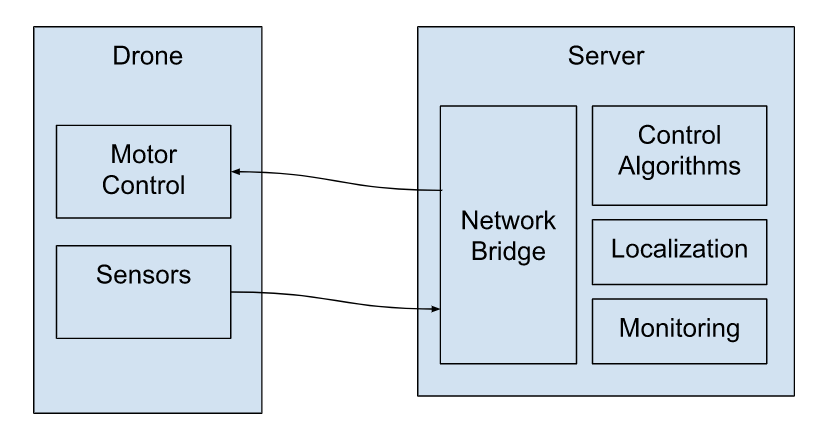

With advances in 5G and computing power, computationally intensive applications can be offloaded to servers to take advantage of additional processing power without adding much latency. The goal of this project is to develop a full framework and demonstration of drone control and localization over the network, with all computation performed on a central server. The project is divided into three parts: a C++-based framework for server-side application development, an object-follower platform to demonstrate sensor data streaming and real-time drone control, and a SLAM platform to demonstrate localization within a prebuilt map.

Sensor data, drone state (e.g., battery level and motor engagement), and the video feed are sent to the server, and motor control commands are sent back to the drone. The following video shows the 3D map generated from feature keypoints extracted from the drone’s video feed. The video also captures the movement of the camera itself, shown in purple.

Remote Robotic Control

We are developing a feedback controller for a line-following car (the endpoint); the controller itself runs on a remote computer (the server). The endpoint takes sensor readings and sends them over Wi-Fi to the server, which replies with a control input for the endpoint. The networking code is implemented using gRPC, a remote procedure call framework from Google. A video demonstration of this car can be seen below.
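To make the sensor-reading-in, control-input-out loop concrete, here is a minimal sketch of one possible server-side controller. It assumes a proportional-derivative (PD) law acting on a signed lateral offset of the line from the sensor centre; the project does not state which control law it uses, so the class name, gains, and sign convention are all hypothetical. The gRPC transport is deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class LineFollowerPD:
    """Hypothetical PD controller: line offset in, clamped steering command out."""

    kp: float = 0.8          # proportional gain (assumed value)
    kd: float = 0.1          # derivative gain (assumed value)
    max_steer: float = 1.0   # steering command is clamped to [-max_steer, max_steer]
    _prev_error: float = 0.0

    def step(self, line_offset: float, dt: float) -> float:
        """Compute one steering command.

        line_offset: signed offset of the line from the sensor centre
                     (positive means the line is to the right).
        dt:          seconds since the previous reading.
        """
        # Finite-difference derivative; skipped when dt is not positive.
        derivative = (line_offset - self._prev_error) / dt if dt > 0 else 0.0
        self._prev_error = line_offset
        steer = self.kp * line_offset + self.kd * derivative
        # Clamp so the endpoint never receives an out-of-range command.
        return max(-self.max_steer, min(self.max_steer, steer))


if __name__ == "__main__":
    controller = LineFollowerPD()
    for offset in (0.4, 0.3, 0.1, 0.0):
        print(round(controller.step(offset, dt=0.05), 3))
```

In a remote setup like the one described, each call to `step` would correspond to one request from the endpoint, so `dt` includes network delay as well as the sensor sampling period.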

We are currently adding the capability to measure the network latency between the endpoint and the controller. We are also working toward running the controller and the endpoint on different networks and supporting multiple endpoints.
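One simple way to approach the latency measurement is to time round trips from the endpoint's side, which avoids needing synchronized clocks on the two machines. The sketch below assumes a blocking `send_and_wait` callable that sends a probe and returns once the reply arrives; that callable, the function name, and the summary fields are hypothetical placeholders, not part of the project's gRPC code.

```python
import statistics
import time


def measure_round_trip(send_and_wait, samples=20, clock=time.perf_counter):
    """Time `samples` blocking round trips and summarize them in seconds.

    send_and_wait: hypothetical callable that sends one probe to the server
                   and returns only after the reply has been received.
    clock:         monotonic time source; injectable so the function can be
                   exercised without a network.
    """
    rtts = []
    for _ in range(samples):
        start = clock()
        send_and_wait()
        rtts.append(clock() - start)
    return {
        "min": min(rtts),
        "median": statistics.median(rtts),
        "max": max(rtts),
    }


if __name__ == "__main__":
    # Stand-in for a real request/reply: sleep for about 5 ms.
    stats = measure_round_trip(lambda: time.sleep(0.005), samples=10)
    print({name: f"{value * 1000:.2f} ms" for name, value in stats.items()})
```

Round-trip time includes server processing time, so it is an upper bound on pure network delay; separating the two would require the server to report how long it spent handling each probe.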